Safe AI Use and Security Guidance Employees Actually Need

Your Employees Are Already Using AI. The Question Is Whether They're Doing It Safely.

Picture this. Your marketing manager is drafting a client proposal and pastes some customer data into ChatGPT to help shape the narrative. Your developer is using GitHub Copilot to speed up a sprint. Your finance analyst is running a quarterly summary through an AI tool they found online. None of it is malicious. All of it is happening right now. And none of it has been cleared by IT.

A recent survey found that 63% of organizations lack formal AI governance frameworks, even as employees across every department feed sensitive data into publicly available AI tools. The gap between what's happening and what's been approved is widening fast, and its costs are real: breaches involving unapproved AI tools have been found to add an average of $670,000 to the cost of a breach. The cause often isn't a sophisticated cyberattack. It's an employee who was trying to be productive.

The answer isn't to ban AI. Your competitors will quietly thank you if you do. Instead, the answer is to get ahead of the curve by building clear, practical guardrails that protect your organization while letting your people take full advantage of what AI can do. That starts with an AI acceptable use policy, and this post is your guide to building one.

Why Every Small Business Needs an AI Acceptable Use Policy

Think of an AI acceptable use policy as a corporate seatbelt. It doesn't slow the car down or limit where you can go. It simply makes sure that when something unexpected happens, you're not launched through the windshield.

An effective AI policy does three things well. First, it establishes governance by defining who owns AI decisions, which tools are approved, and where access boundaries sit. Without this clarity, AI sprawl creates coordination chaos as different teams adopt incompatible tools with no central visibility. Second, it reduces risk by setting clear data-handling rules before an incident tests them. And third, it enhances productivity. When employees know exactly what's approved and what's off-limits, they can use AI confidently without second-guessing every decision or, worse, avoiding tools that could genuinely help them.

The businesses that get this right aren't the ones who move the slowest. They're the ones who move deliberately, with enough structure to accelerate without crashing.

The 4 Pillars of Safe AI Use for Employees

Pillar 1: Humans Stay Accountable for Everything AI Produces

This is the non-negotiable, foundational principle. No matter how good the AI output may look, a competent human must always review, validate, and take responsibility for it before it goes anywhere.

AI can draft the contract, analyze the dataset, or generate the report, but a human must review it, catch the errors, and approve the final version. That's not bureaucracy. That's basic risk management, because AI systems hallucinate, inherit biases from training data, and misunderstand context in ways that aren't always obvious on a first read.

The human-in-the-loop requirement ensures that when AI gets something wrong, as it inevitably will, there's a person in place to catch it before it becomes a costly mistake. Embedding this principle into your culture is as important as embedding it into your policy document.

Pillar 2: Be Transparent About When and How AI Is Involved

Employees have to be upfront about the use of AI in their work. This isn't about creating bureaucratic paper trails. Rather, it's about building the kind of internal and external trust that makes AI adoption sustainable.

In practice, transparency means disclosing when AI assisted in creating content, documenting which tools processed which data, being clear with clients about AI involvement in deliverables, and maintaining audit trails for AI-assisted decisions. This matters because organizations that practice transparency choose when and how that disclosure happens. Organizations that don't practice it risk having their AI use discovered rather than disclosed, which is a very different and much more damaging conversation to have with a client or regulator.

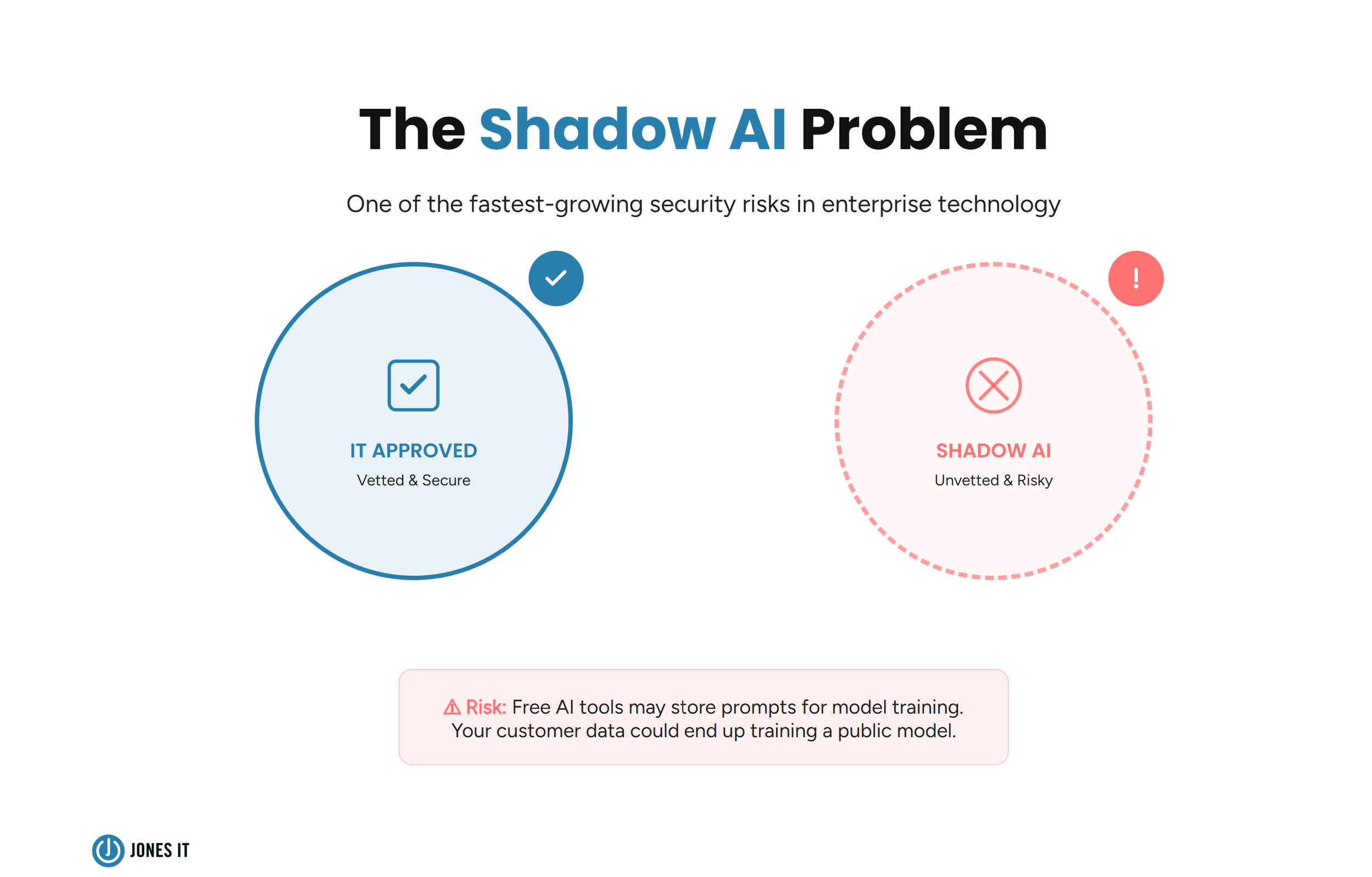

Pillar 3: Shut Down Shadow AI Before It Shuts Down You

For most organizations, the most critical gap in security policy is the absence of one simple rule: employees may use only AI tools that IT has vetted and approved. All other AI tools, regardless of their popularity or apparent safety, are strictly forbidden for use with company data.

Shadow AI refers to AI tools used inside an organization without IT oversight, and it's one of the fastest-growing security risks in business today. The problem is that the exposure is easy to miss. Many free AI tools store user prompts and may use them to improve their models. This means that the customer data your team uploads to summarize a report, or the source code a developer runs through an AI assistant, may be retained and used to train a public model. It's not a breach in the traditional sense, but the data has still left your control, and you may never know.

Beyond data exposure, your AI acceptable use policy should draw clear lines around specific prohibited actions, such as using AI to make hiring or performance evaluation decisions without explicit approval, creating deepfakes or synthetic media, automating outreach in ways that violate platform terms of service, and processing any company data through unapproved tools. Everyone needs to understand that the IT vetting process exists to evaluate a tool's data handling practices, security certifications, and compliance with regulations like GDPR and CCPA before that tool ever touches company data. That process is the difference between a managed risk and an unmanaged one.

Pillar 4: Watch for Bias and Discriminatory Outputs

AI systems can perpetuate and even amplify biases that may be present in their training data, often in ways that aren't immediately visible. This makes bias vigilance an ongoing responsibility, instead of a one-time review.

Employees should be actively watching for outputs that seem to disadvantage particular groups based on protected characteristics. This is especially critical in any area where AI is involved in decisions affecting people directly, including hiring and recruitment, performance evaluations, customer credit decisions, and service prioritization. If an output raises a flag, it's a signal for human review before any action is taken, and the flag itself should be documented so that patterns can be identified over time.

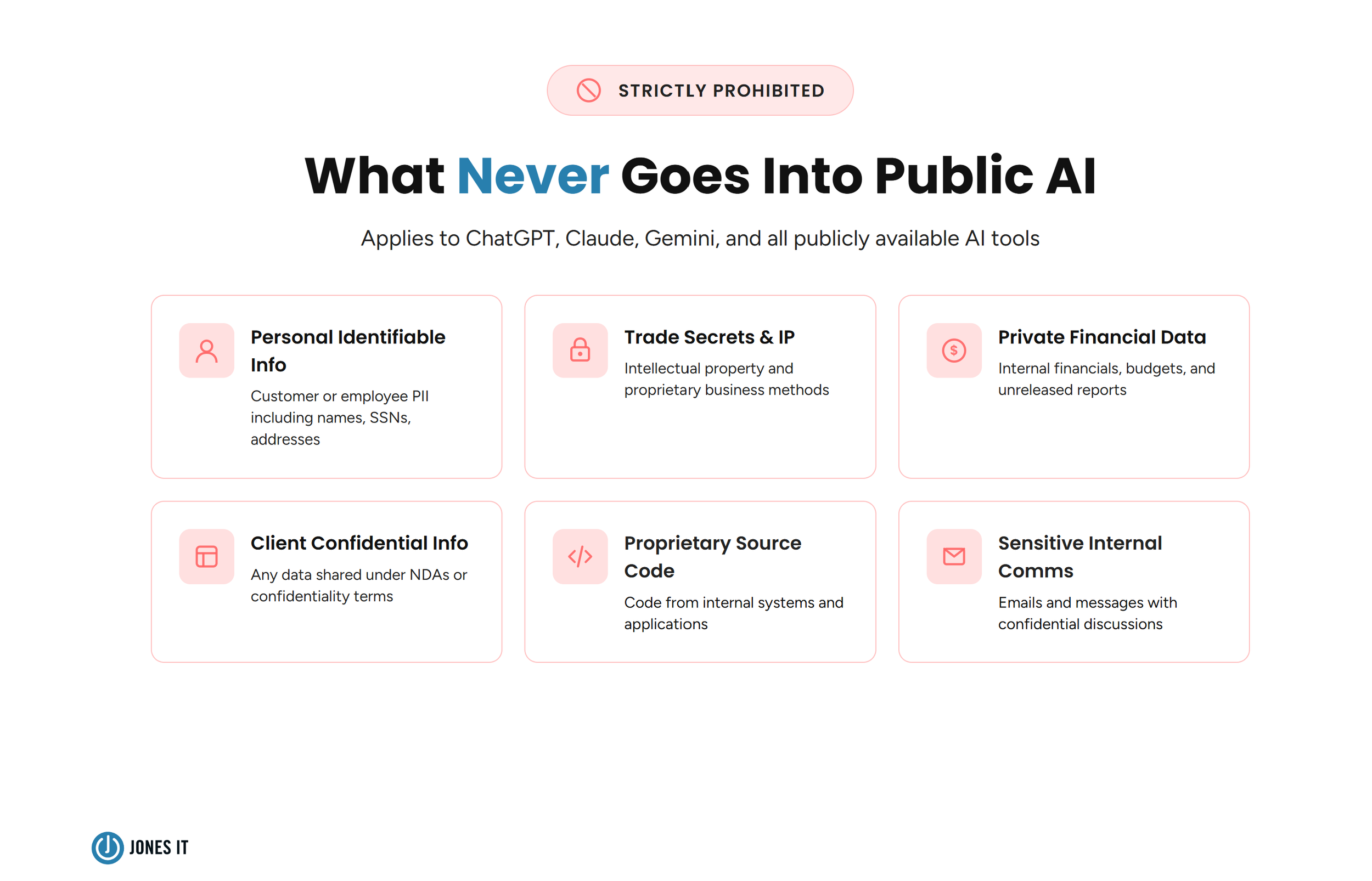

AI Data Security: What Should Never Go Into a Public AI Tool

Protecting sensitive information is the most urgent security requirement of the AI era, and the rules here need to be explicit rather than implied. Your AI security framework should make these prohibitions clear to every employee, regardless of their technical background.

No employee should ever enter any of the following into a publicly available AI tool: personally identifiable information (PII) about customers or employees, trade secrets or intellectual property, private company financial data, client confidential information, source code from proprietary systems, or internal communications containing sensitive discussions. That applies to ChatGPT, Claude, Gemini, and every other publicly available AI system, regardless of how well-known or trustworthy those tools appear.

Beyond the prohibitions, there are three practical data protection habits that every AI-using employee should develop.

The first is anonymization. Whenever data needs to go into an AI tool, strip it of all identifying information first, removing names, account numbers, and any other details that could link the data back to a specific person or company. This simple step dramatically reduces exposure even when working with approved enterprise tools.
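To make the anonymization habit concrete, here is a minimal Python sketch of a redaction step run before text goes into an AI tool. It assumes you already know which names appear in the text, and it uses a few illustrative regular expressions; the `redact` function and its `PATTERNS` table are hypothetical, not part of any product. Real PII detection needs far broader coverage, so treat this as an illustration of the idea rather than a complete safeguard.

```python
import re

# Illustrative patterns only (hypothetical); real PII detection needs much broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "ACCOUNT": re.compile(r"\b\d{8,16}\b"),  # assumes account numbers are 8-16 digits
}


def redact(text: str, known_names: list[str]) -> str:
    """Replace known names and pattern matches with labeled placeholder tokens."""
    # Replace each known name as a whole phrase, ignoring case.
    for name in known_names:
        text = re.sub(rf"\b{re.escape(name)}\b", "[NAME]", text, flags=re.IGNORECASE)
    # Replace emails, then phone numbers, then long digit runs (dict order is preserved).
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    sample = "Jane Doe (jane.doe@example.com, 555-123-4567) owes $1,200 on account 12345678."
    print(redact(sample, ["Jane Doe"]))
    # prints: [NAME] ([EMAIL], [PHONE]) owes $1,200 on account [ACCOUNT].
```

Replacing details with labeled placeholders like `[EMAIL]`, rather than deleting them outright, keeps the text readable enough for the AI tool to work with while removing the link back to a real person.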

The second is access controls. Sensitive AI systems should be protected by multi-factor authentication, role-based access controls, and encrypted communication channels. These aren't extras for organizations handling particularly sensitive data. They're table stakes for any business using AI at work.

The third is using enterprise AI tools wherever possible. Tools that have been specifically built for business use with established data agreements are meaningfully safer than their public equivalents, because the data agreements define what the provider can and cannot do with your information. If your organization hasn't yet adopted enterprise AI tools, that conversation needs to be escalated to leadership.

Building an AI-Ready Workforce

Policies only work when people understand them. And beyond compliance, employees who genuinely understand how AI works, what it can get wrong, and why certain data should never touch a public tool are simply better at using AI safely. That's why education is a core requirement of safe AI adoption, not a nice-to-have.

Regulations are also starting to formalize this expectation. The EU AI Act, for example, has required since February 2025 that organizations providing or deploying AI systems take measures to ensure their staff have a sufficient level of AI literacy, and similar requirements are emerging in other jurisdictions. So building a well-trained workforce isn't just good practice. In many cases, it's becoming mandatory.

Effective AI training covers five things: how AI systems process and potentially store data, recognition of AI limitations and failure modes, company-specific policies on approved tools and prohibited uses, procedures for reporting concerns or policy violations, and the regulatory requirements that apply to each employee's specific role. That last point matters more than most organizations realize. The compliance obligations of someone processing healthcare data are different from those of someone in marketing, and training should reflect that.

Given how rapidly AI capabilities are evolving, ongoing training is essential. One-time workshops become obsolete quickly in a field that looks meaningfully different every six months. Organizations that build continuous learning into their AI culture, rather than treating training as a box to check, find that their employees become genuine assets in managing AI risk instead of potential liabilities.

On the compliance side, your training program should align with your broader AI risk management framework and account for GDPR, CCPA, and the growing patchwork of state-level AI regulations across the US.

How to Make Your AI Policy Mean Something

The most well-written policy in the world does nothing if people treat it as a suggestion. That's why clear enforcement procedures are as important as clear policy language.

Your organization needs defined channels for reporting violations, whether that's a data leak, a harmful AI output, or a colleague using an unapproved tool. And violations need to carry consequences proportional to their severity. A first-time mistake using an unsanctioned tool for non-sensitive work calls for different handling than deliberately uploading customer PII to a public AI system. That proportionality matters because it signals that the policy is calibrated to actual risk rather than blanket punishment, which makes it far more likely that people will take it seriously.

The goal of enforcement isn't punitive. It's creating the kind of accountability culture where data protection becomes everyone's job, not just IT's.

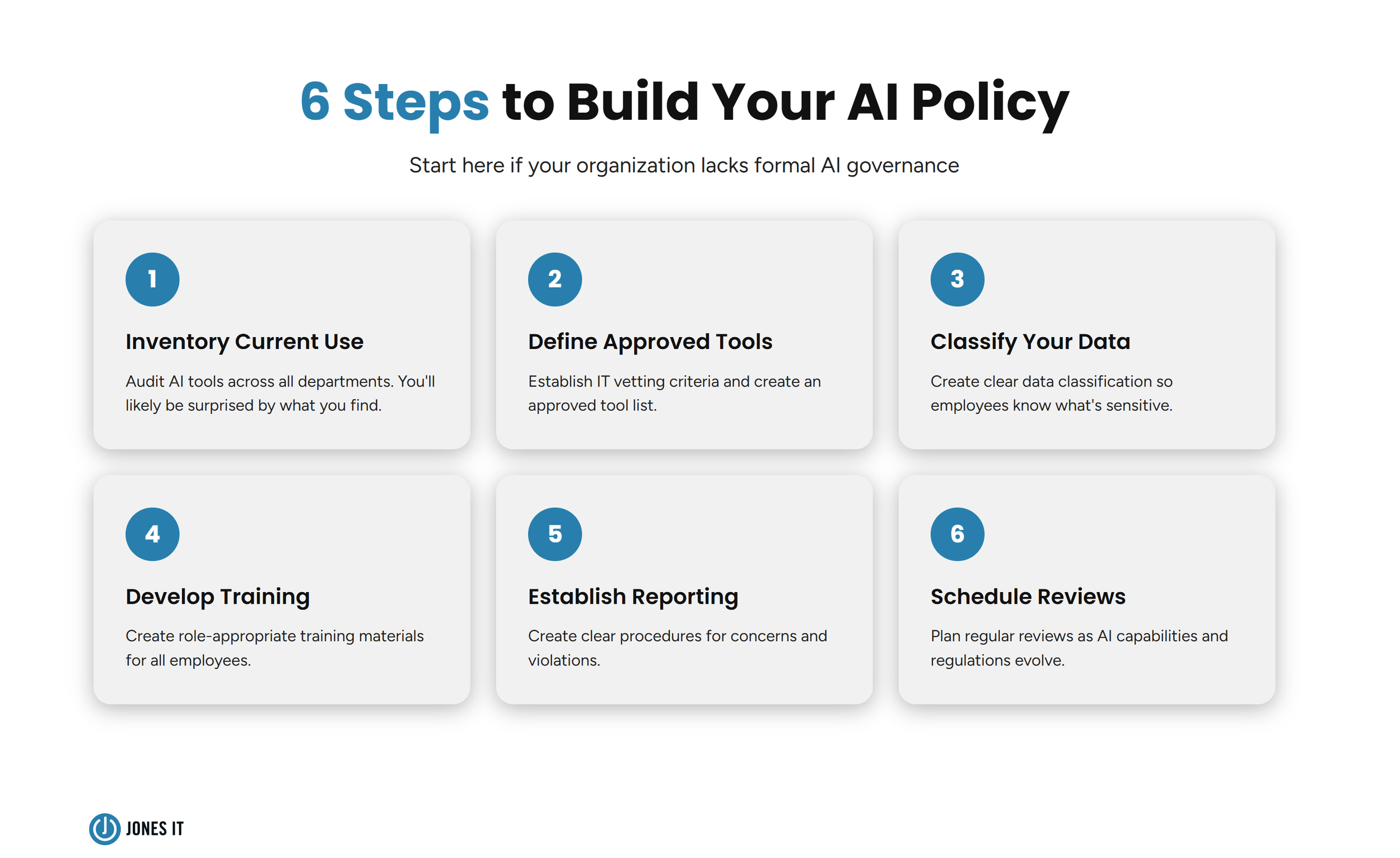

6 Steps to Build Your AI Acceptable Use Policy Right Now

If your organization doesn't yet have formal AI governance in place, start with these six steps. They won't take months to execute, and the protection they provide starts the moment you begin.

Inventory current AI use across departments (you'll likely be surprised).

Define approved tools and establish IT vetting criteria.

Create a clear data classification scheme so employees know what's sensitive.

Develop training materials appropriate to different roles.

Establish reporting procedures for concerns and violations.

Schedule regular reviews as AI capabilities and regulations evolve.
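The second and third steps work best together: once IT approves a tool, record the most sensitive data classification it's cleared for, and any planned use can be checked against that ceiling. The Python sketch below assumes a hypothetical four-tier scheme (`PUBLIC` through `RESTRICTED`) and made-up tool names; your own tiers and approved-tool register will differ.

```python
from enum import IntEnum


class DataClass(IntEnum):
    """Hypothetical data classification tiers, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical approved-tool register: tool name -> highest tier IT has cleared it for.
APPROVED_TOOLS = {
    "enterprise-assistant": DataClass.CONFIDENTIAL,
    "code-assistant-business": DataClass.INTERNAL,
}


def is_allowed(tool: str, data: DataClass) -> bool:
    """Return True only if the tool is approved and cleared for this data tier."""
    ceiling = APPROVED_TOOLS.get(tool)
    if ceiling is None:
        return False  # unapproved (shadow AI) tools are blocked for all company data
    return data <= ceiling


if __name__ == "__main__":
    print(is_allowed("enterprise-assistant", DataClass.CONFIDENTIAL))  # True
    print(is_allowed("enterprise-assistant", DataClass.RESTRICTED))    # False
    print(is_allowed("free-web-chatbot", DataClass.PUBLIC))            # False
```

Treating any tool missing from the register as blocked mirrors the Pillar 3 rule that unapproved tools are off-limits for company data, however popular they are.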

Successful AI adoption isn't about moving faster than everyone else. It's about building governance that enables safe scaling. Your employees want to use AI to do better work. Give them the guardrails that let them do exactly that, without putting the company at risk in the process.

Need help building AI governance that enables innovation while protecting your organization? We've helped hundreds of Bay Area businesses develop AI policies that actually work in practice. Reach out to schedule a free consultation.