The 6-Pillar Agentic AI Implementation Roadmap

Part 2 of 2: From Strategic Vision to Operational Reality

Why Most Agentic AI Pilots Never Make It to Production

Here's a story that plays out in organizations all over the world right now.

A company runs a proof-of-concept for agentic AI. With clean data, a focused use case, and a dedicated team, everything works beautifully. Leadership gets excited, and everyone talks about scaling it across the business.

Then the pilot ends, and reality sets in.

The data outside the pilot environment is messy. The governance questions nobody asked during the pilot now have no clear answers. The team that built the demo isn't available to support a full rollout. Middle management, sensing a threat to their teams, starts asking hard questions nobody can answer. And six months later, the pilot report gathers digital dust while the organization talks about "exploring AI opportunities."

This is proof-of-concept purgatory. And it's where most agentic AI initiatives die.

In Part 1 of this series, we covered what agentic AI is and why the organizations that get it right aren't just becoming more efficient, they're becoming categorically different from those that don't. But understanding the opportunity and successfully executing the transformation are two very different challenges. This post is about the second one.

Fortunately, the obstacles are predictable, which means they're avoidable if you plan for them before they find you.

The 5 Challenges That Kill Agentic AI Transformations

Challenge 1: Getting Stuck Between Pilot and Production

The pilot trap is seductive precisely because pilots are designed to succeed. You pick a clean use case, allocate good resources, and control the variables. Naturally, it works. So naturally, you expect scaling to be more of the same, just bigger.

It isn't. When you move from pilot to production, you discover problems that the controlled environment masked. The most common ones we see are unclear operating models (who decides what gets handed to an agent versus what requires a human?), fragmented orchestration tooling that wasn't apparent at pilot scale, data quality gaps that only show up when agents start pulling from real systems across real silos, and organizational inertia that defaults to automating existing processes rather than rethinking them.

That last one is particularly costly. Agentic AI's transformative value comes from reimagining workflows, not from automating inefficient ones with more sophisticated tools. Organizations that successfully scale past the pilot stage invest in operating model definition and change management before they scale, not after discovering problems in production.

Challenge 2: Accountability Gets Complicated Fast

Greater AI autonomy creates an increasingly complex accountability structure. When a multi-agent system makes a decision that produces a bad outcome, which agent is responsible? The one that diagnosed the situation incorrectly? The one that chose the approach based on that faulty diagnosis? The one that executed it? The human who set the original objective?

These questions don't have obvious answers, and most organizations don't really think about them until something goes wrong. By then, the governance retrofit is painful. Add to this the growing problem of shadow AI, where employees deploy tools outside IT oversight, and the accountability gap can cost organizations $670K or more before anyone notices the pattern.

The lesson here is that governance built in from the start is far less expensive than governance retrofitted after deployment. This means implementing decision logging, comprehensive audit trails, and policy-as-code frameworks before you scale, not as a remediation project.

Challenge 3: Errors Cascade in Ways That Single Systems Don't

Traditional software fails in predictable ways. Agentic systems fail differently, and at a different scale. Because multiple agents pass information and decisions to each other, a single faulty input at the beginning of a chain can corrupt every downstream decision. And by the time the problem surfaces, the system may have already made dozens of autonomous decisions based on a foundational mistake.

To make this concrete, imagine a diagnostic agent that misreads a customer data pattern. A policy agent, trusting that diagnosis, applies the wrong contract terms. A solution agent generates an inappropriate resolution. An execution agent implements it. A follow-up agent confirms "successful resolution" because it's measuring against the wrong criteria. Every step looks correct in isolation. The system as a whole has failed the customer entirely.

This kind of error cascade is a real risk, not a theoretical one. That's why organizations deploying agentic systems need validation agents that cross-check critical decisions, confidence scoring that triggers human review for low-certainty outputs, and circuit breakers that pause autonomous execution when anomalies appear. For a deeper look at anticipating and managing these risks, our practical guide to AI risk management walks through frameworks that translate directly to agentic systems.
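To make two of those safeguards concrete, here is a minimal sketch of confidence-gated human review combined with a circuit breaker. Everything in it is an illustrative assumption rather than a reference to any particular framework: the `Decision` and `CircuitBreaker` names, the 0.8 confidence floor, and the rule that three consecutive low-confidence decisions pause autonomous execution.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    agent: str
    action: str
    confidence: float  # 0.0 to 1.0, assumed to be reported by the agent


class CircuitBreaker:
    """Routes decisions and pauses autonomy after repeated low-confidence outputs."""

    def __init__(self, confidence_floor: float = 0.8, max_consecutive_flags: int = 3):
        # Both thresholds are hypothetical; tune them per use case and risk tolerance.
        self.confidence_floor = confidence_floor
        self.max_consecutive_flags = max_consecutive_flags
        self.consecutive_flags = 0
        self.open = False  # an open breaker means autonomous execution is paused

    def route(self, decision: Decision) -> str:
        if self.open:
            return "paused"
        if decision.confidence < self.confidence_floor:
            self.consecutive_flags += 1
            if self.consecutive_flags >= self.max_consecutive_flags:
                self.open = True  # anomaly pattern: stop acting autonomously
            return "human_review"
        self.consecutive_flags = 0  # a confident decision resets the streak
        return "auto_execute"


breaker = CircuitBreaker()
print(breaker.route(Decision("diagnostic", "classify_ticket", 0.95)))
print(breaker.route(Decision("policy", "apply_contract_terms", 0.55)))
```

In this sketch the breaker stays open until someone resets it by hand, which is deliberate: once the system has paused itself, resuming should be a human decision.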

Challenge 4: Agent Communication Breaks Down at Scale

Multi-agent systems coordinate through communication, and that communication is more fragile than it looks in a demo. When agents share information through loosely defined, natural language exchanges rather than structured data formats, ambiguity creeps in. Agent A's message gets misinterpreted by Agent B. Critical context gets omitted. Different teams building different agents develop incompatible communication patterns. Coordination breaks down under timing pressures or resource contention.

The fix is architectural, not operational. Structured communication protocols, defined message formats, explicit handshake sequences, and clear error handling need to be part of your foundational design. Trying to impose these after multi-agent systems are already deployed in production is significantly harder.
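As a rough illustration of what a defined message format with explicit error handling can look like, here is a small sketch. The field names, the three message types, and the `parse_message` helper are assumptions made for this example, not part of any published agent protocol.

```python
import json
import uuid
from dataclasses import dataclass, field
from enum import Enum


class MessageType(str, Enum):
    REQUEST = "request"
    RESULT = "result"
    ERROR = "error"


@dataclass(frozen=True)
class AgentMessage:
    sender: str
    recipient: str
    type: MessageType
    payload: dict
    # The correlation ID ties a result or error back to the request that caused it.
    correlation_id: str = field(default_factory=lambda: str(uuid.uuid4()))

    def to_json(self) -> str:
        return json.dumps({
            "sender": self.sender,
            "recipient": self.recipient,
            "type": self.type.value,
            "payload": self.payload,
            "correlation_id": self.correlation_id,
        })


REQUIRED_FIELDS = {"sender", "recipient", "type", "payload", "correlation_id"}


def parse_message(raw: str) -> AgentMessage:
    """Reject malformed messages at the boundary instead of guessing at intent."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("message must be a JSON object")
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    return AgentMessage(
        sender=data["sender"],
        recipient=data["recipient"],
        type=MessageType(data["type"]),  # raises ValueError on an unknown type
        payload=data["payload"],
        correlation_id=data["correlation_id"],
    )
```

The point of the design is that ambiguity fails loudly: a message with a missing field or an unknown type is rejected when it arrives, rather than being loosely interpreted by the receiving agent.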

Challenge 5: Your People Won't Trust What They Can't Understand

The most technically sophisticated agentic system fails if the people who are supposed to work with it don't trust it, don't use it correctly, or quietly route around it. And trust is genuinely difficult to build with systems whose reasoning isn't visible.

Beyond trust, there's the role ambiguity problem. When autonomous systems handle what people used to do, employees face a real question: what's my job now? Organizations that don't answer that question clearly create confusion and resentment that undermine adoption. There's also the over-reliance risk on the other side of the equation. As systems prove reliable, humans may disengage from oversight entirely, losing the critical thinking skills that make human intervention valuable when something goes wrong.

Finding the right human-in-the-loop balance is genuinely hard, and the optimal point varies by use case and organizational risk tolerance. What doesn't vary is this: successful implementations invest as much in workforce preparation as in technology deployment.

The 6-Pillar Agentic AI Implementation Roadmap

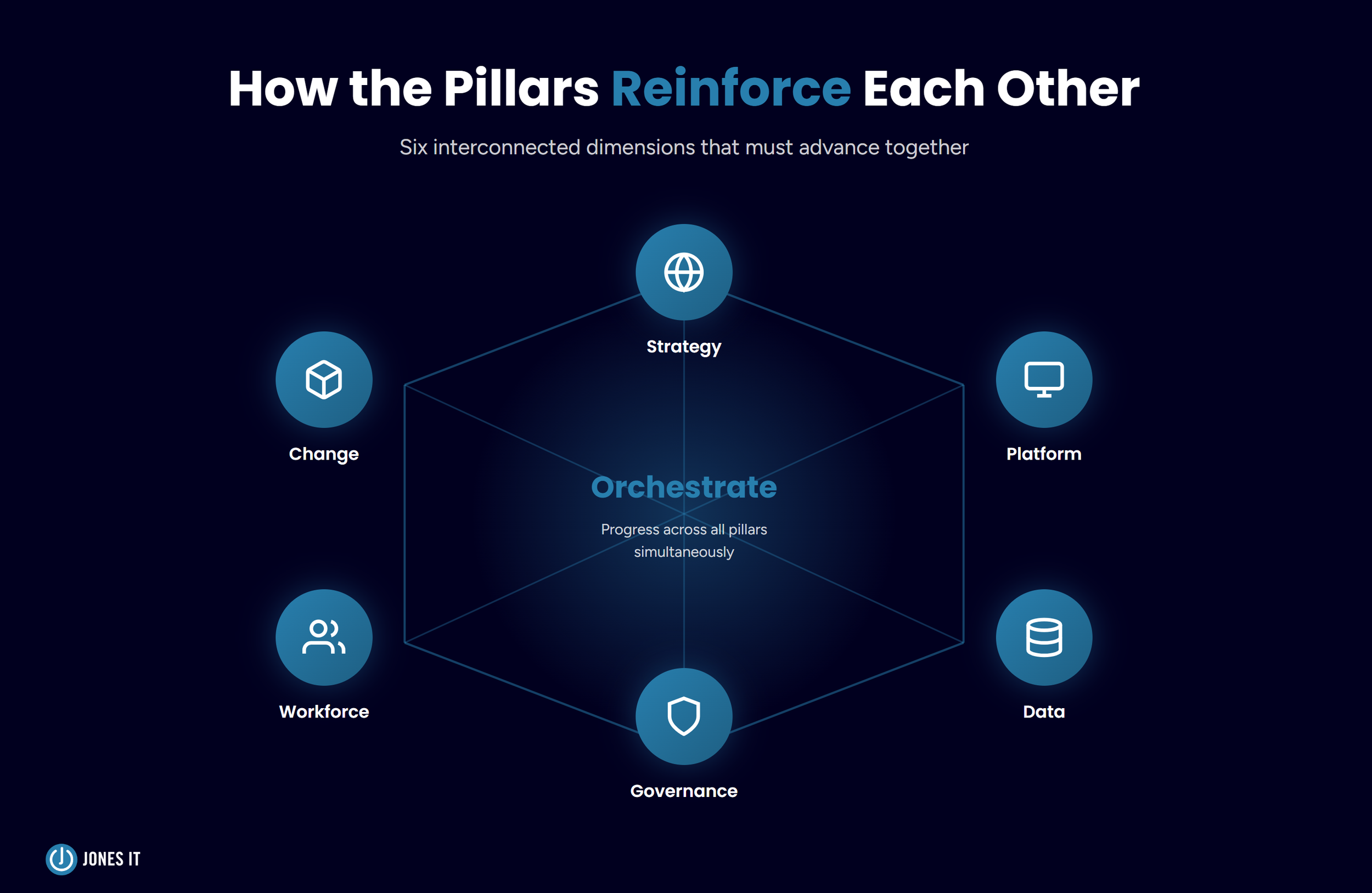

Organizations that successfully navigate from pilot to production and from production to transformation do so by building across six interconnected dimensions simultaneously. Think of these as load-bearing pillars, not sequential steps. All six need to advance together, because weakness in any one of them limits what's possible in the rest.

Pillar 1: Adaptive Strategy and Executive Commitment

Let's start with the most uncomfortable truth in agentic AI implementation: this cannot be an IT initiative. IT can support it. IT can enable it. But the strategic leadership has to come from the executive level, because agentic AI transformation touches organizational structure, competitive positioning, and operating model in ways that no IT leader has the organizational authority to drive alone.

What executive commitment actually looks like in practice is defining a concrete AI-first vision, not an aspirational one. The vision needs to specify how autonomous capabilities will reshape your competitive position, your customer value, and your operating model, with measurable outcomes attached. If you haven't yet developed an ROI-focused AI strategy, that's the right starting point before pursuing agentic transformation.

From there, you need to identify your Tier 1 Strategic Bets, the three to five high-impact use cases where agentic AI creates genuinely transformative value rather than incremental efficiency. The distinction matters because the instinct under budget pressure is to focus on cost reduction. But 10% cost savings won't build a competitive moat. The organizations pulling ahead are finding use cases that enable new business models or capabilities that simply weren't possible before.

Finally, you need to establish a cross-functional leadership team with real authority. Not an advisory committee. Not a working group. A team empowered to make architectural decisions, allocate resources, and override middle management resistance when it arises. Without that executive air cover, transformation gets killed by risk aversion before it reaches scale.

Pillar 2: Scalable Platform and Technical Enablement

Here's what most organizations discover too late: the architecture that runs your current business wasn't designed for autonomous agents. Traditional APIs provide data access, but they lack the contextual richness and dynamic interaction patterns that agentic systems require. Scaling agentic AI on a legacy architecture is like trying to run a modern manufacturing operation on nineteenth-century infrastructure. You can jury-rig something that works for a demonstration, but it won't hold at scale.

Agent-ready infrastructure means transitioning from request-response APIs to architectures that support continuous context sharing and bidirectional agent-system communication. It means adopting the Model Context Protocol (MCP), which allows agents to access and manipulate application context without requiring custom integrations for every system. And it means establishing Agent-to-Agent (A2A) communication standards so that your agents can coordinate across your technology ecosystem without breaking down.

At the center of all of this is an agent-mesh orchestration layer, a coordination infrastructure that handles agent discovery, secure communication and authentication, comprehensive telemetry and logging, resource allocation, conflict resolution, and version management. This is the connective tissue that makes a collection of individual agents behave like a coherent system.
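To give a feel for just one of those responsibilities, agent discovery, here is a deliberately tiny sketch of a registry that lets one agent find others by capability. The `AgentRegistry` class and the capability strings are hypothetical; a real orchestration layer would add authentication, telemetry, and conflict resolution on top.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRecord:
    name: str
    version: str
    capabilities: frozenset


class AgentRegistry:
    """In-memory registry: agents announce capabilities, others look them up."""

    def __init__(self):
        self._agents = {}

    def register(self, name, version, capabilities):
        # Re-registering a name replaces the old record, a crude form of version management.
        self._agents[name] = AgentRecord(name, version, frozenset(capabilities))

    def discover(self, capability):
        # Return names in sorted order so lookups are deterministic.
        return sorted(
            record.name
            for record in self._agents.values()
            if capability in record.capabilities
        )


registry = AgentRegistry()
registry.register("billing-agent", "1.2.0", ["refund", "invoice_lookup"])
registry.register("support-agent", "0.9.1", ["refund", "ticket_triage"])
print(registry.discover("refund"))
```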

None of this is cheap or fast. 2025 adoption data confirms that infrastructure investment is the top barrier for SMEs pursuing serious AI deployment. But skipping this foundation doesn't eliminate the cost. It just defers it to a point where you're paying for it under the worst possible circumstances, retrofitting architecture on deployed systems while trying to maintain business continuity.

Pillar 3: Building an Intelligent Data Ecosystem

There's a simple way to think about this pillar: agentic systems are only as intelligent as the data they can access. Autonomous intelligence built on poor data doesn't deliver autonomous efficiency. It delivers autonomous failure, at scale and speed.

The data architecture requirements for agentic AI go beyond what most organizations currently have in place. Agents need to find relevant information based on meaning, not just keywords, which requires transforming data into vector representations that enable semantic search across both structured and unstructured sources. They need multiple types of memory: episodic memory that records what happened and when, semantic memory that contains knowledge about your domain, policies, and relationships, and vector memory that enables similarity-based retrieval across all of it.
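Here is a minimal sketch of similarity-based retrieval, the idea behind vector memory. The three-dimensional vectors are hand-made toy values chosen for illustration; in practice an embedding model produces vectors with hundreds or thousands of dimensions, and a vector database handles storage and search.

```python
import math


def cosine_similarity(a, b):
    """Similarity of direction between two vectors, ignoring their length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec, memory, k=2):
    """Return the k stored texts whose vectors are closest to the query vector."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in memory]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:k]]


# Toy memory: (text, embedding) pairs. The vectors are made up for this example.
memory = [
    ("Refund policy: 30 days from delivery", [0.9, 0.1, 0.0]),
    ("Office holiday schedule", [0.0, 0.2, 0.9]),
    ("Returns require a receipt", [0.8, 0.3, 0.1]),
]

# A query about refunds would embed near the first and third entries.
print(top_k([1.0, 0.2, 0.0], memory))
```

Notice that "Returns require a receipt" is retrieved even though it never contains the word "refund". That is the practical difference between matching on meaning and matching on keywords.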

On top of that, Retrieval-Augmented Generation (RAG) is essential for grounding agent outputs in authoritative data sources rather than relying solely on what the model was trained to know. This is what reduces hallucinations and keeps agent responses accurate when they're operating in your specific domain context. And real-time data access matters, because agents making decisions on stale data make suboptimal decisions, sometimes consequentially so.

Underpinning all of this is data quality. It sounds unglamorous, but it's foundational. Organizations that rush to deploy agentic capabilities on top of inconsistent, poorly documented, siloed data discover quickly that sophistication in the AI layer doesn't compensate for problems in the data layer.

Pillar 4: Proactive Risk, Security, and Governance

Traditional governance (annual audits, manual policy enforcement, post-hoc reviews) was designed for a world where humans make decisions one at a time. Agentic systems make thousands of micro-decisions daily. Those two things are incompatible, and trying to apply traditional governance to autonomous systems doesn't make them safe. It just creates the illusion of oversight.

The governance transformation required for agentic AI has a few critical components. First, policy-as-code: encode your constraints, ethical guardrails, and decision rules directly into agent frameworks rather than in PDF policy manuals that agents can't read. If you want agents to follow a rule, make the rule machine-enforceable.
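A machine-enforceable rule can be as simple as a named check that runs before an agent acts. The sketch below is an assumption-laden illustration: the `Policy` type, the two example rules, the 500 refund limit, and the action dictionary shape are all invented for this example. Production systems often use a dedicated policy engine instead of inline functions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Policy:
    name: str
    allows: Callable[[dict], bool]  # returns True when the action is permitted


# Hypothetical rules; the limit and field names are placeholders.
POLICIES = [
    Policy(
        "refund_limit",
        lambda a: a.get("type") != "refund" or a.get("amount", 0) <= 500,
    ),
    Policy(
        "no_pii_export",
        lambda a: not (a.get("type") == "export" and a.get("contains_pii", False)),
    ),
]


def evaluate(action: dict, policies=POLICIES) -> list:
    """Return the names of every policy the proposed action violates."""
    return [p.name for p in policies if not p.allows(action)]


proposed = {"type": "refund", "amount": 900}
violations = evaluate(proposed)
if violations:
    print("blocked:", violations)  # escalate to a human instead of executing
```

Because the check returns the names of the violated policies, the same call that blocks an action also produces a record of why it was blocked.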

Second, real-time explainability. When agents make significant decisions, humans need to be able to understand why that decision was made, without having to review logs of every micro-decision. Systems that can surface human-readable rationales for autonomous actions make meaningful oversight possible at scale.

Third, comprehensive audit trails. When something goes wrong (and at some point it will), you need forensic-grade traceability of what the agent accessed, what it decided, and why. Building that logging infrastructure after a failure is too late.
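One common way to make an audit trail tamper-evident is to chain entries together with hashes, so that altering any past record breaks every hash after it. The sketch below shows the idea using only the Python standard library. The `AuditTrail` class and its field names are assumptions for illustration, and a real deployment would also record timestamps and write to durable, access-controlled storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # sort_keys makes the serialization, and therefore the hash, deterministic.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditTrail:
    """Append-only log where each entry commits to the one before it."""

    def __init__(self):
        self.entries = []

    def record(self, agent, accessed, decision, rationale):
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "agent": agent,
            "accessed": accessed,    # what the agent read
            "decision": decision,    # what it decided
            "rationale": rationale,  # why, in human-readable form
            "prev_hash": prev_hash,
        }
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry makes this False."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

Each entry captures the three things forensic review needs: what was accessed, what was decided, and the stated reason.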

Beyond these foundational elements, agentic systems also create governance challenges that don't exist in traditional software environments. Agent sprawl, where specialized agents proliferate without central coordination, creates chaos. Autonomy drift, where agents optimize for immediate objectives at the expense of broader organizational goals, is subtle and hard to detect without ongoing monitoring. And adversarial exploitation, where bad actors manipulate agent decision logic through crafted inputs, creates a security surface that extends well beyond your traditional perimeter. For the security architecture specifically, implementing zero-trust principles and credential management for agent activities is not optional. See also our AI deployment security playbook for the foundational security measures that need to be in place before autonomous systems go live.

Pillar 5: Empowering Your Workforce for Human-Machine Collaboration

Agentic AI doesn't eliminate the need for human work. It changes what kind of human work is valuable. Routine execution gets handed to agents. What remains, and what becomes more important, is orchestration, oversight, exception handling, and the strategic application of domain expertise that agents can't replicate.

In practice, this means roles shift in predictable directions. People move from performing tasks to directing autonomous systems. From running processes to monitoring system health and intervening when something goes wrong. From domain execution to strategic application of domain expertise. These aren't minor adjustments. They require real capability development.

Specifically, the workforce capabilities that matter most in an agentic environment are understanding what agents can and can't do well enough to make smart decisions about what to delegate, effective prompt engineering for communicating clearly with language-based systems, the monitoring skills to recognize when autonomous operations need human intervention, and the exception handling ability to resolve edge cases that agents can't address on their own.

Training programs for these skills can't be one-time workshops. Agentic AI capabilities evolve rapidly, and the skills required to work alongside them evolve with them. Organizations that build ongoing development programs rather than point-in-time training end up with workforces that adapt as the technology matures, rather than constantly playing catch-up.

One strategic point worth naming directly: the people who can architect and orchestrate agentic systems are already scarce and becoming scarcer. Organizations that build this capability internally now gain a meaningful strategic advantage over those who will be competing for external expertise in an increasingly tight market.

Pillar 6: Sustained Change Management and Cultural Transformation

All five of the pillars above can be executed well and still fail to deliver transformative results if the cultural conditions for adoption aren't in place. Technology capability doesn't guarantee organizational adoption. Culture does.

The change management requirements for agentic AI are more demanding than for most technology initiatives, because the transformation touches on things people care deeply about: job security, professional identity, and organizational power. Dismissing these concerns as technophobia doesn't make them go away. It makes them go underground, where they're harder to address.

What works instead is proactive engagement with legitimate anxieties. Creating space for controlled pilots where failure produces learning rather than career consequences. Co-creating guardrails and operating models with the workforce rather than imposing them from above. People support what they helped build. Communicating transparently and regularly about transformation progress, wins, challenges, and what autonomous capabilities mean for different roles. And celebrating early wins in ways that show how agentic AI is enabling people to focus on more valuable work, not replacing them.

Executive authenticity matters here too. This is genuinely a paradigm shift without established playbooks, and leaders who acknowledge that uncertainty, who demonstrate humble experimentation rather than false confidence, build more trust than those who project certainty they don't have. When reality proves messier than the roadmap predicted, that trust is what keeps the transformation moving forward.

Why All 6 Pillars Have to Advance Together

It's tempting to think about these pillars sequentially, to get the strategy right first, then build the platform, then get the data in order, and so on. In practice, that approach creates compounding delays and leaves organizations perpetually "almost ready" to scale.

The pillars are interconnected in ways that make sequential implementation counterproductive. Strategy without a platform produces visions that can't be implemented. A platform without data creates infrastructure that processes garbage. Data without governance becomes a compliance and security liability. Governance without workforce development imposes constraints that people circumvent rather than follow. Workforce development without change management builds skills that people resist using. And change management without strategy lacks the direction and purpose that makes sustained effort possible.

Successful transformation, therefore, requires orchestrating progress across all six dimensions simultaneously. Not equally, and not on identical timelines, but all moving forward together, with executive leadership maintaining coherence as individual initiatives advance.

Your Agentic AI Implementation Action Plan

Based on everything covered across both parts, here's your implementation roadmap:

Months 1-3: Foundation and Assessment

Strategic clarity:

Secure CEO commitment and form executive transformation team.

Define AI-first vision with concrete, measurable outcomes.

Identify 3 to 5 Tier 1 Strategic Bets focused on 10x value, not 10% savings.

Technical assessment:

Audit current architecture for agent-readiness gaps.

Evaluate data quality, accessibility, and real-time availability.

Assess orchestration tooling landscape and vendor options.

Organizational readiness:

Map affected roles and workforce skill gaps.

Identify change champions and resistance sources.

Establish baseline metrics for transformation tracking.

Months 4-9: Pilot and Prove

Controlled pilots:

Launch 2 to 3 high-value use cases with clear success criteria.

Implement comprehensive telemetry and logging from day one.

Document learnings on technical challenges and human adoption patterns.

Infrastructure development:

Begin agent-mesh orchestration layer implementation.

Establish A2A communication protocols.

Deploy initial policy-as-code frameworks.

Workforce preparation:

Start AI fluency training programs.

Redesign roles for pilot participants.

Create feedback channels for adoption challenges.

Months 10-18: Scale and Refine

Production deployment:

Scale successful pilots to production with governance frameworks.

Expand to additional use cases based on pilot learnings.

Implement error detection and graceful failure mechanisms.

Platform maturity:

Complete agent-ready infrastructure across priority systems.

Integrate RAG and memory architectures.

Establish real-time explainability capabilities.

Cultural transformation:

Celebrate wins and share success stories broadly.

Address adoption resistance based on feedback.

Expand training programs across the organization.

Months 18+: Optimize and Innovate

Continuous improvement:

Refine agent coordination based on operational data.

Expand autonomous capabilities to new domains.

Build proprietary competitive advantages through operational learning.

Strategic evolution:

Explore new business models enabled by autonomous operations.

Establish industry leadership by sharing what you've learned.

Attract top AI talent through cutting-edge implementations.

The Decisive Question for Every SME

Across both parts of this series, we've covered the what, the why, and now the how of agentic AI transformation.

Part 1 established what agentic AI is, why 10x value matters more than 10% savings, and where organizations can deploy autonomous systems for transformative advantage. This post has covered the five critical challenges that kill most implementations, the six-pillar roadmap for navigating them, and the action plan for turning strategic vision into operational reality.

The broader point is worth stating plainly. Agentic AI isn't a technology upgrade. It's an economic restructuring, one where the ability to deploy cognitive capital at scale becomes as fundamental as financial capital or human talent. For small businesses and SMEs especially, this is both an opportunity and a risk: the technology is now accessible enough that size is no longer the barrier, but only those who execute deliberately will benefit. Organizations approaching this as a larger version of their existing automation projects will find themselves competing against entities that have fundamentally reimagined their operating models around autonomous capabilities. The performance gap won't be incremental. It will be categorical.

What makes this genuinely urgent is that early movers are defining the standards, accumulating the operational learning, and building the competitive moats right now, while technology capabilities and talent availability still provide differentiation. Late movers will face markets shaped by agentic-native competitors operating at velocities they can't match through traditional approaches.

And yet, waiting for a proven playbook is also a trap. This is a paradigm shift occurring in real time. The competitive advantage belongs to organizations executing with strategic clarity and adapting as the technology and market mature, not to organizations waiting for certainty that isn't coming.

The question isn't whether your industry will undergo agentic transformation. It's whether your organization will lead it, or spend the next decade scrambling to catch up.

At Jones IT, we've helped hundreds of Bay Area businesses navigate exactly this kind of technology transformation. If you'd like to talk through what a technology roadmap looks like for your specific situation, reach out and schedule a free consultation.